A technical SEO audit is a comprehensive evaluation of a website’s technical components and how they affect search engine optimization (SEO). It’s a critical process for any website owner who wants to improve online visibility and drive more organic traffic. The audit identifies technical issues that could be holding back the site’s search rankings and making the site harder for users to find.

The objective of a Technical SEO Audit is to provide a thorough analysis of the website’s technical infrastructure, identify potential issues, and offer actionable recommendations to fix them. This can include issues such as broken links, website speed and performance, mobile-friendliness, crawlability, and much more.

By conducting regular technical SEO audits, website owners can ensure that their website is optimized for search engines and is providing the best possible user experience.

Introduction

A technical SEO audit is a vital part of optimizing your website for search engines. It identifies technical issues so that you can fix them and improve your site’s performance. The purpose of the audit is to check for technical, content, and link-related issues that affect your Google rankings.

1. Align SEO with Your Overall Marketing Strategy

The first step in conducting a successful technical SEO audit is to align your SEO strategy with your overall marketing plan. To design a robust SEO marketing strategy, you must:

• Use relevant, long-tail keywords

• Follow search engine guidelines for all on-page factors

• Create quality content that is relevant and valuable to your audience

2. Check the Site’s Crawlability

Ensure that search engine bots can crawl your website and index your pages properly. You can use tools such as Google Search Console or Screaming Frog to check your website’s crawlability.

3. Verify the Site’s Indexability

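Check whether your website’s pages are being indexed by Google. You can use the “site:” operator in Google to search for your pages and see if they appear in the search results. The results of a “site:” search are only approximate, so confirm the status of important pages with the Page indexing report or the URL Inspection tool in Google Search Console.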
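One common reason a page is missing from the index is a `noindex` directive in its robots meta tag. The following is a minimal sketch, assuming you already have the page’s HTML as a string; the class and function names are hypothetical, and the check does not cover the `X-Robots-Tag` HTTP header, which can carry the same directive.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects the directives of every <meta name="robots"> tag."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = dict(attrs)
        if (attr.get("name") or "").lower() == "robots":
            content = attr.get("content") or ""
            # Directives are comma separated, e.g. "noindex, follow".
            self.directives += [d.strip().lower() for d in content.split(",")]


def is_noindex(html):
    """Return True if the page asks search engines not to index it."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return "noindex" in parser.directives
```

Running `is_noindex` over the pages you expect to rank gives a quick list of candidates to review before looking for deeper causes.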

4. Fix Broken Links

Broken links can negatively impact your website’s user experience and ranking. Use tools like Broken Link Checker or Screaming Frog to find and fix broken links on your site.

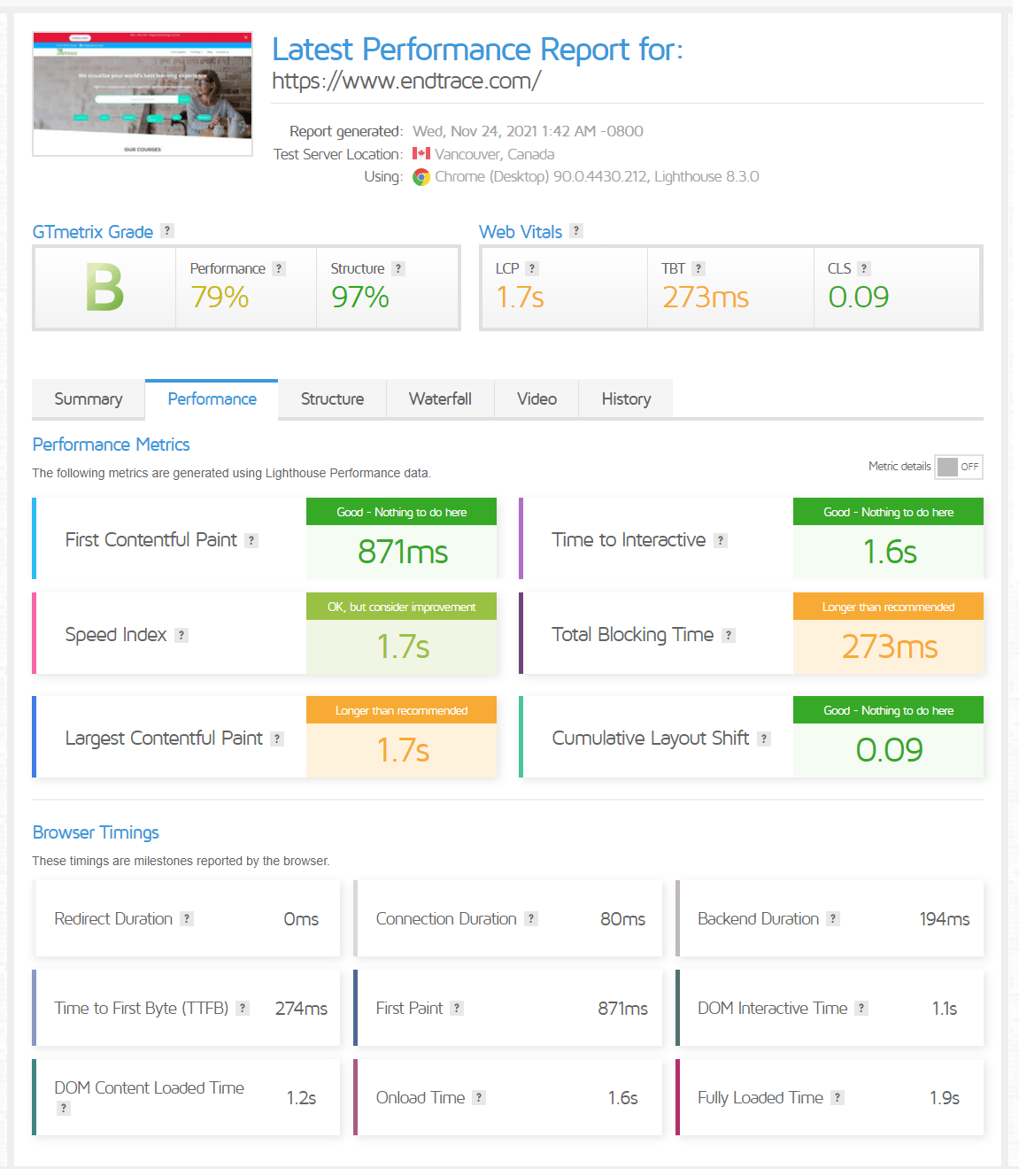

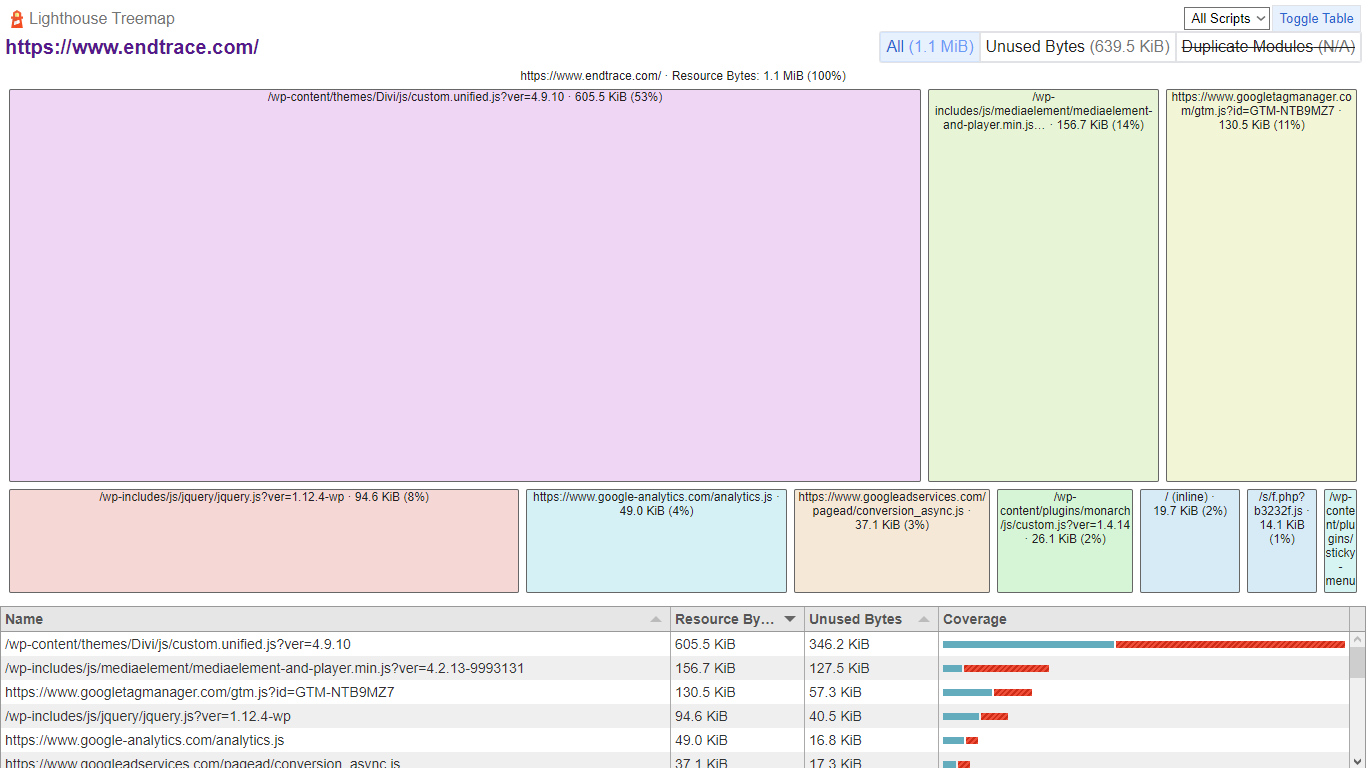
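For a quick check without a dedicated tool, the idea behind a broken-link scan can be sketched with Python’s standard library. This is an illustrative sketch, not how Broken Link Checker or Screaming Frog work internally; the function names are hypothetical. It collects the links on one page and asks each target for its HTTP status. Some servers reject `HEAD` requests, so treat an unexpected status as a prompt to re-check manually.

```python
import urllib.error
import urllib.request
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkCollector(HTMLParser):
    """Collects absolute URLs from the <a href> tags of one page."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href")
        # Skip in-page anchors and non-HTTP schemes.
        if href and not href.startswith(("#", "mailto:", "tel:", "javascript:")):
            self.links.append(urljoin(self.base_url, href))


def extract_links(html, base_url):
    collector = LinkCollector(base_url)
    collector.feed(html)
    return collector.links


def link_status(url, timeout=10):
    """Return the HTTP status code for url, or None if the request failed."""
    request = urllib.request.Request(url, method="HEAD")
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as error:
        return error.code  # e.g. 404 or 410 for a broken link
    except OSError:
        return None  # DNS failure, refused connection, timeout
```

A link is a candidate for fixing when `link_status` returns `None` or a 4xx/5xx code.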

5. Monitor Site Speed and Performance

Site speed and performance are crucial for a good user experience and for search engine ranking. Check your website’s load time using tools like GTmetrix or Google PageSpeed Insights.

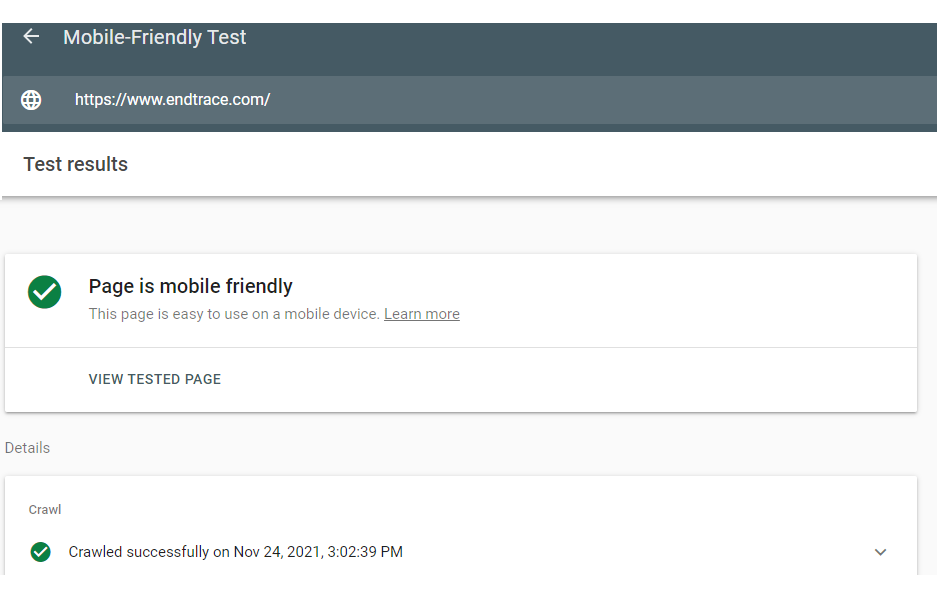

6. Optimize for Mobile

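With the increasing number of mobile users, it’s crucial to have a mobile-friendly website. Google retired its standalone Mobile-Friendly Test in December 2023, so use Lighthouse (available in Chrome DevTools and through PageSpeed Insights) to check whether your site is optimized for mobile devices.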
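One basic signal you can verify yourself is the responsive viewport meta tag. The sketch below assumes the page’s HTML is available as a string and uses hypothetical names; a passing result only shows that the tag is present and does not prove the layout works well on small screens.

```python
from html.parser import HTMLParser


class ViewportParser(HTMLParser):
    """Records the content of the <meta name="viewport"> tag, if any."""

    def __init__(self):
        super().__init__()
        self.viewport = None

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        if tag == "meta" and (attr.get("name") or "").lower() == "viewport":
            self.viewport = attr.get("content") or ""


def has_responsive_viewport(html):
    """Return True if the page declares width=device-width in its viewport."""
    parser = ViewportParser()
    parser.feed(html)
    if parser.viewport is None:
        return False
    return "width=device-width" in parser.viewport.replace(" ", "").lower()
```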

7. Check the URL Structure

Ensure that your website’s URLs are well structured, easy to understand, and follow the best practices for SEO.
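What counts as well structured varies by site, but a few widely used conventions can be checked automatically. The sketch below is illustrative: the specific rules (HTTPS, lowercase paths, hyphens rather than underscores, no query parameters, a 100-character limit) are assumptions chosen for the example, not official search engine requirements.

```python
from urllib.parse import urlsplit


def url_issues(url, max_length=100):
    """Return a list of convention violations found in url."""
    issues = []
    parts = urlsplit(url)
    if parts.scheme != "https":
        issues.append("not served over HTTPS")
    if parts.path != parts.path.lower():
        issues.append("path contains uppercase letters")
    if "_" in parts.path:
        issues.append("path uses underscores instead of hyphens")
    if parts.query:
        issues.append("URL carries query parameters")
    if len(url) > max_length:
        issues.append(f"URL is longer than {max_length} characters")
    return issues
```

For example, `url_issues("https://example.com/technical-seo-audit")` returns an empty list, while a URL such as `http://example.com/Blog_Post?id=7` is flagged on four counts.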

8. Ensure Proper Use of Header Tags

Header tags (H1, H2, etc.) are important for structuring content on a page and improving its readability. Make sure your pages use header tags correctly and consistently.
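A simple way to audit header usage is to list the heading levels in document order and look for gaps. The sketch below uses hypothetical names and treats two things as problems: a page without exactly one H1, and a jump that skips a level (for example H1 followed directly by H3). Both rules are common conventions rather than strict requirements.

```python
from html.parser import HTMLParser


class HeadingParser(HTMLParser):
    """Records the level of every h1-h6 tag in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def heading_issues(html):
    """Return a list of heading-structure problems found in html."""
    parser = HeadingParser()
    parser.feed(html)
    levels = parser.levels
    issues = []
    h1_count = levels.count(1)
    if h1_count != 1:
        issues.append(f"expected one h1, found {h1_count}")
    # Compare each heading with the one before it to spot skipped levels.
    for previous, current in zip(levels, levels[1:]):
        if current > previous + 1:
            issues.append(f"h{previous} is followed by h{current}")
    return issues
```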

9. Check for Duplicate Content

Duplicate content can harm your website’s ranking. Use tools like Siteliner to identify duplicate content on your site, then remove it or consolidate it with redirects or canonical tags.
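Exact duplicates can also be found by fingerprinting each page’s text. The sketch below assumes you have already extracted the body text of each page into a dictionary keyed by URL; the function names are hypothetical. It only catches pages whose text is identical after whitespace and case are normalized, so near-duplicates still need a dedicated tool.

```python
import hashlib
import re
from collections import defaultdict


def content_fingerprint(text):
    """Hash the text after collapsing whitespace and lowercasing it."""
    normalized = re.sub(r"\s+", " ", text).strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def find_duplicates(pages):
    """pages maps URL -> body text. Returns groups of URLs with identical text."""
    groups = defaultdict(list)
    for url, text in pages.items():
        groups[content_fingerprint(text)].append(url)
    return [urls for urls in groups.values() if len(urls) > 1]
```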

10. Check the Robots.txt File

The robots.txt file tells search engine bots which parts of your site they may crawl. Check that your robots.txt file is set up correctly and doesn’t block important pages from being crawled.
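Python’s standard library includes a robots.txt parser, which makes it easy to test a list of important URLs against your rules. The sketch below uses a hypothetical helper name and takes the file’s contents as a string. The standard-library parser follows the basic exclusion rules and may not match Google’s handling of wildcard patterns, so treat the result as a first pass.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that robots_txt disallows for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]
```

If a page you want ranked shows up in the returned list, the corresponding `Disallow` rule needs to be narrowed or removed.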

11. Monitor and Fix Crawl Errors

Check for crawl errors using Google Search Console and fix any that are impacting your website’s ranking.

12. Check for Canonicalization Issues

Canonicalization is the process of indicating the preferred version of a web page. Check for canonicalization issues and fix them to avoid any confusion for search engine bots.
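The preferred version is usually declared with a `<link rel="canonical">` tag in the page’s head. The following sketch, with hypothetical names, reads that tag from a page’s HTML and reports three situations worth reviewing: no canonical tag, several conflicting tags, or a canonical that points to a different URL. The last case is often intentional, so the message is a prompt to check rather than an error.

```python
from html.parser import HTMLParser


class CanonicalParser(HTMLParser):
    """Collects the href of every <link rel="canonical"> tag."""

    def __init__(self):
        super().__init__()
        self.canonicals = []

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        rel = (attr.get("rel") or "").lower().split()
        if tag == "link" and "canonical" in rel and attr.get("href"):
            self.canonicals.append(attr["href"])


def canonical_issue(html, page_url):
    """Return a description of the canonical problem, or None if consistent."""
    parser = CanonicalParser()
    parser.feed(html)
    if not parser.canonicals:
        return "missing canonical tag"
    if len(set(parser.canonicals)) > 1:
        return "conflicting canonical tags"
    # Ignore a trailing slash when comparing the two URLs.
    if parser.canonicals[0].rstrip("/") != page_url.rstrip("/"):
        return f"canonical points to {parser.canonicals[0]}"
    return None
```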

13. Optimize Image Size and Alt Text

Optimizing images by compressing their size and using descriptive alt text can improve your website’s load time and accessibility for users with visual impairments.
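Missing alt text is easy to detect automatically. The sketch below uses hypothetical names and lists the `src` of every image whose `alt` attribute is absent or blank. Purely decorative images may legitimately use an empty `alt`, so review the list rather than filling in every entry.

```python
from html.parser import HTMLParser


class ImageAltParser(HTMLParser):
    """Collects the src of every <img> without meaningful alt text."""

    def __init__(self):
        super().__init__()
        self.missing_alt = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attr = dict(attrs)
        if not (attr.get("alt") or "").strip():
            self.missing_alt.append(attr.get("src") or "(no src)")


def images_missing_alt(html):
    parser = ImageAltParser()
    parser.feed(html)
    return parser.missing_alt
```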

14. Check the Sitemap and Its Submission

A sitemap helps search engines discover and crawl your website’s pages effectively. Make sure you have an XML sitemap and that it has been submitted to Google Search Console.
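Before submitting, it helps to confirm that the sitemap parses and lists the pages you expect. The sketch below, with a hypothetical function name, reads a standard XML sitemap from a string and returns its URLs. It assumes a plain `urlset` file in the sitemaps.org 0.9 namespace and does not follow sitemap index files.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text):
    """Return the <loc> value of every <url> entry in a sitemap."""
    root = ET.fromstring(xml_text)
    return [
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    ]
```

Comparing this list with the pages found by a crawl shows which URLs are missing from the sitemap and which listed URLs no longer exist.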

15. Monitor and Improve Website Security

Website security is crucial for a positive user experience and search engine ranking. Monitor and improve your website’s security by serving it over HTTPS with a valid SSL/TLS certificate, using a firewall, and keeping regular backups.

A technical SEO audit should evaluate the on-page optimization techniques of each web page to ensure that it is optimized for search engines and users.

Some of the SEO audit tools you might need while performing an audit:

• Google PageSpeed Insights

• Google Structured Data Testing Tool

• Google Analytics

• Google Search Console

• Screaming Frog

• SpyFu

• Pingdom

• PageSpeed Tool

16. Analyze and Study Keywords and Organic Traffic

Keywords are crucial for website SEO and need to be carefully analyzed and selected. Favor long-tail keywords that combine meaningful search volume with low competition. Much of a site’s organic traffic depends on the keywords it targets. Research keywords using both paid tools and the free Google Keyword Planner, and finalize the list.

17. Learn from your Competition

Analyze the keywords used by your competitors to find untapped search terms. Consider keywords that they are targeting but you are not. On-page improvements such as content optimization and header tag updates can lead to significant increases in organic traffic.

18. Analyze and Optimize Internal Links

Review your internal links and fix any that are broken. Use the Links report in Google Search Console to find and improve internal links. For backlinks, build genuine links from relevant sites, avoid bulk link providers, and focus on natural link building. Study which sites link to high-ranking competitors and pursue similar links, or find relevant websites manually.

19. Track Results

Regularly track your site audit results to know what changes have the most impact on your website. Use tools like SpyFu for easy rank tracking.

20. Stick to the Basics

Great SEO is about consistency and following basic practices in a structured way. Conduct technical SEO site audits frequently, learn from the results, and make improvements for better performance and increased organic traffic.

Finally, Track Your Site Audit Results

If you didn’t track what happened after you implemented certain changes on your website, it would be like operating with a blindfold. You wouldn’t know what you should keep doing or stop doing.

Luckily, SpyFu makes rank tracking easy. Just open the tracking dashboard and you can see your historical ranks for any keyword.

Finally, stick to the basics and generate SEO audit reports regularly. Great SEO is all about consistency and applying the basics in a structured way: run technical SEO site audits frequently, put good practices in place, and keep learning from the results to improve your website’s organic traffic.

